
1/19/2024 Matlab ismember tolerance

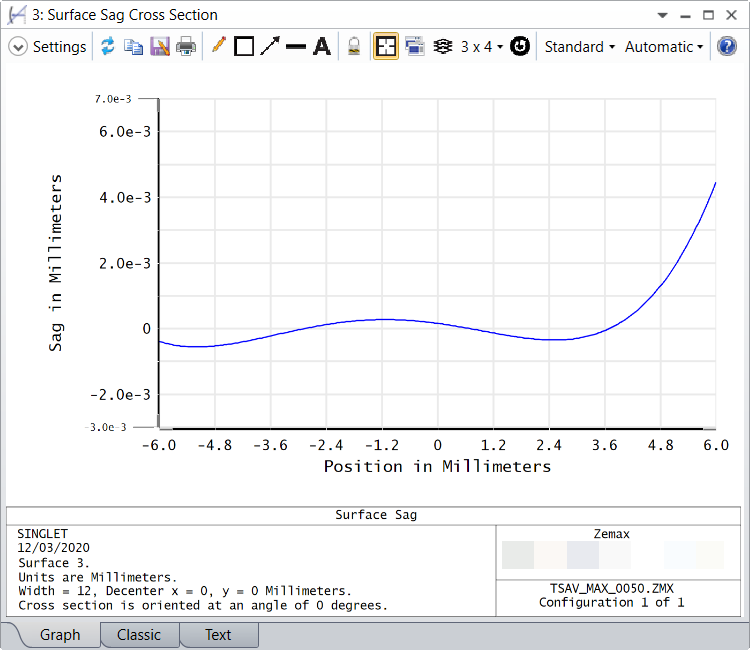

Which tolerance should I choose when using knickpointfinder?

Kai Deng from the GFZ Potsdam commented on my previous post on knickpointfinder and asked about the parameter ‘tol’: You said that “the value of tol should reflect uncertainties that are inherent in longitudinal river profile data”. However, I’m still confused about how to translate this sentence into code, or in other words, how can I validate my choice of tol by some criteria based on code?

The ‘tol’ parameter determines how many knickpoints the finder will detect, and we might not want to miss a knickpoint. At the same time, we know that river profile data often contain erroneous values, and we wouldn’t want to pick knickpoints that are merely artefacts of the data. So, what value of ‘tol’ should we choose?

I wrote that “the value of tol should reflect uncertainties that are inherent in longitudinal river profile data”. More specifically, I would argue that one should choose tolerance values that are higher than the maximum expected error between the measured and the true river profile. With lower tolerance values we would likely increase the false positive rate, i.e., the probability that we choose a knickpoint that is due to an artefact.

Now, the problem that we immediately face is that we don’t know this error. Of course, there are quality measures for most common DEM products, but usually these do not apply to values measured along valley bottoms, at least if you take common global DEM products such as SRTM, ASTER GDEM, etc. (Schwanghart and Scherler, 2017). Usually, there are also no repeated measurements. Hence, we cannot estimate the error as we would in the case of measurements in the lab.

But there is another approach: What we know for sure is that river profiles should monotonically decrease downstream. At least, this is true for the water surface, yet not necessarily for bed elevations (e.g. due to the presence of riffle-pool sequences). The elevation of a pixel on the stream network should thus be either lower than or equal to the elevation of its upstream neighbor. If it is not, then the value is probably wrong (for whatever reason). I say ‘probably’, because the upstream pixel might have an erroneously low value, too.

The following code demonstrates how we could determine these errors through downstream minima imposition (imposemin) and upstream maxima imposition (cummaxupstream).

DEM = GRIDobj('srtm_bigtujunga30m_utm11.tif')

The shaded areas in the plot generated this way (here I show a zoomed version) show the differences between these values.

Shaded areas show the difference between upstream maxima and downstream minima imposition.

The maximum difference between the elevations is the maximum relative error that we estimate from the profile. Now we might want to take this elevation difference as the tolerance value for the knickpointfinder. However, this value might be very high if our profile is characterized by a few positive or negative outliers. This would result in only few, if any, knickpoints.

Thus, we could come up with a more robust estimate of the error which is based on quantiles. Robust in this sense means: robust to outliers. The proposed technique is constrained regularized smoothing (CRS) (Schwanghart and Scherler, 2017).

What is constrained regularized smoothing? It is an approach to hydrologically correct DEMs based on quantile regression techniques. While minima and maxima imposition represent the two extremes of hydrological conditioning (carving and filling, respectively), CRS (and quantile carving, which is similar but doesn’t smooth) is a hybrid technique and returns river longitudinal profiles that run along the tau-th quantile of the data. Why would this be preferred? Simply because we would not want the shape of the resulting profile to be predominantly determined by the errors with the largest magnitude.

Here is how to apply quantile carving using the quantiles 0.1 and 0.9. Of course, the maximum difference is lower than the previous value obtained by minima and maxima imposition.

Let’s see how well the algorithm performs if we add some additional errors.

plotdz(S,z)

River profile with some additional errors.

Let’s compute the difference between carving and filling.

zmin = imposemin(S,z)

And what about our uncertainty estimate based on the CRS algorithm?

z90 = crs(S,z,'K',1,'tau',0.9)

The CRS result is not affected at all by a few outliers. That means it enables us to derive a rather robust estimate of the uncertainty of the data. Moreover, we can use the CRS-corrected profile as input to the knickpointfinder, because we have filtered the spikes from the data. As elevations are often overestimated, you might directly use the node-attribute list z10.

I have shown that you can use an uncertainty estimate derived from your river profile as direct input to knickpointfinder. There are, however, a few things to note: Don’t carve or fill your data before using CRS.
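
To see why the difference between filling and carving is sensitive to outliers, here is a minimal sketch on a synthetic profile that needs no TopoToolbox. Everything in it is an assumption made for illustration: the linear "true" profile, the noise level, and the 100 m spike are hypothetical, and cummin and cummax only mimic what imposemin and cummaxupstream do on a single, unbranched profile ordered from headwater to outlet.

```matlab
% Synthetic, unbranched profile ordered from headwater (index 1) to outlet.
% All numbers are hypothetical and chosen only for illustration.
rng(1);                               % fixed seed for reproducibility
x     = (0:99)';                      % node index along the stream
ztrue = 1000 - 5*x;                   % assumed true profile [m]
z     = ztrue + 3*randn(size(x));     % measured profile with noise [m]

% Carving (downstream minima imposition): each node takes the minimum
% of itself and all nodes upstream of it.
zmin = cummin(z);

% Filling (upstream maxima imposition): each node takes the maximum
% of itself and all nodes downstream of it.
zmax = cummax(z,'reverse');

% Maximum difference between filling and carving as an error estimate.
tolRaw = max(zmax - zmin);

% Add one positive spike and repeat the estimate.
zs       = z;
zs(50)   = zs(50) + 100;
tolSpike = max(cummax(zs,'reverse') - cummin(zs));

fprintf('tol without spike: %.1f m\n', tolRaw);
fprintf('tol with spike:    %.1f m\n', tolSpike);
```

A single spike dominates the second estimate, which is the motivation for a quantile-based estimate such as the difference between the CRS profiles for tau = 0.9 and tau = 0.1.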
